Introduction: The Quiet Revolution in Design

There's a quiet revolution happening in design. You might have felt it already: a subtle shift in our relationship with technology that challenges our fundamental understanding of what design is meant to be.

For decades, we've designed digital experiences with a certain understanding: we create static interfaces that users navigate in predictable patterns. The interfaces might be complex, beautiful, intuitive, even delightful, but they remain, at their core, fixed artefacts waiting to be used.

But something profound has changed.

With the emergence of AI, we've crossed a threshold into a new design paradigm that Nielsen Norman Group calls "the first new UI paradigm in 60 years." We're no longer creating experiences to be consumed. We're architecting relationships to be nurtured.

Consider the difference:

Traditional Design:

- A fixed interface that responds predictably

- Users learn the system, but the system remains constant

- Success measured by efficiency and clarity of function

- The designer's vision remains intact through use

Relationship Design:

- A dynamic partnership between two learning systems

- Both human and AI evolve through interaction

- Success measured by the quality of collaboration

- The experience emerges through use, not before it

This shift requires us to think less like interface designers and more like relationship counsellors, creating the conditions for productive partnerships between humans and intelligent systems.

Trust: The Essential Foundation for Collaboration

In this new paradigm, trust becomes the essential foundation without which nothing else can flourish. Much like Maslow's hierarchy of needs establishes physiological requirements as the base for human development, trust forms the bedrock upon which all meaningful AI relationships must be built.

Without trust, users naturally limit their engagement, restrict their inputs, and maintain the kind of vigilance that prevents true collaboration. The stakes are hard to overstate: without trust, even the most sophisticated AI capabilities will remain underused or be rejected entirely.

Recent research from the University of Melbourne and KPMG (2025) reveals the magnitude of this challenge: only 46% of people globally are willing to trust AI systems, despite 66% using AI regularly.

Put another way, 54% of people won't trust your AI at face value. You need to earn it.

This "trust gap" isn't merely an academic concern but the fundamental challenge that will determine whether AI fulfils its transformative potential or remains a limited, constrained technology.

Understanding Trust Formation in AI Relationships

The Psychology of Trust in AI Interactions

Recent research published in Frontiers in Psychology (April 2024) demonstrates that trust in AI follows similar psychological patterns to human relationships, requiring both perceived competence and perceived benevolence. However, AI faces unique challenges in conveying benevolence, as it lacks the emotional signalling that humans naturally produce.

The research reveals that just as in human relationships, trust in AI develops through stages and requires consistent signals that indicate both capability and positive intentions.

The Dual Trust Challenge: Users and Organisations

Trust in AI isn't just about user adoption. It's equally about organisational adoption. While designing for end-user trust, we must simultaneously address the trust concerns of stakeholders within our organisations.

McKinsey's 2024 research shows that while 78% of organisations now use AI in at least one business function (up from 55% a year earlier), only 1% of business leaders report their companies have reached AI maturity. This disparity signals that organisations themselves are struggling with trust in AI systems.

Leaders are concerned about risk, compliance, and potential harm, and rightfully so. A 2025 Pew Research Center study found that executives' attitudes toward AI remain deeply cautious, with industry leaders significantly more concerned than excited about increasing AI adoption (51% concerned vs. 11% excited). The research further reveals that while 95% of executives believe AI will impact their industry, only 17% believe this impact will be "very positive", a striking indication of the trust gap at leadership levels.

By framing design decisions within a trust framework, designers can address both user and organisational trust challenges simultaneously.

Designing for the Inevitable: Trust Through Graceful Failure

The Reality of AI as Prediction Machines

In the rush to showcase AI capabilities, many teams overlook a basic truth: AI systems are prediction machines, and predictions, by their very nature, will sometimes be wrong. Errors aren't exceptions. They're an inherent characteristic of these systems.

This reality requires a profound shift in our design approach. Rather than designing only for success scenarios and treating errors as edge cases, we must acknowledge that failures are inevitable and design for graceful recovery as a core feature.

The most sophisticated AI experiences don't attempt to achieve perfect performance (an impossible standard), but rather focus on:

- Setting appropriate expectations about the probabilistic nature of AI

- Making uncertainty visible when confidence is low

- Providing graceful failure modes that maintain user agency

- Creating transparent remediation paths when errors occur

- Building feedback loops that demonstrate learning from mistakes

Trust in AI systems doesn't come from perfection. It comes from appropriate confidence, transparent limitations, and resilient responses to inevitable errors. The system that acknowledges its limitations often engenders more trust than one that presents an illusion of infallibility only to disappoint later.
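
To make "making uncertainty visible" and "graceful failure modes" more concrete, here is a minimal Python sketch of confidence-aware presentation. It assumes the model exposes a calibrated confidence score between 0 and 1; the threshold values, mode names, and action labels are hypothetical placeholders to be tuned against your own data, not recommended settings.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them against your own model's calibration data.
HIGH_CONFIDENCE = 0.85
LOW_CONFIDENCE = 0.50


@dataclass
class Prediction:
    text: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]


def present(prediction: Prediction) -> dict:
    """Decide how to surface a prediction based on its confidence."""
    if not 0.0 <= prediction.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if prediction.confidence >= HIGH_CONFIDENCE:
        # Confident: show the answer, but keep an easy way to correct it.
        return {"mode": "answer", "text": prediction.text,
                "actions": ["accept", "edit"]}
    if prediction.confidence >= LOW_CONFIDENCE:
        # Uncertain: show the answer with a visible caveat.
        return {"mode": "answer_with_caveat", "text": prediction.text,
                "note": "I'm not fully sure about this. Please check it.",
                "actions": ["accept", "edit", "show_alternatives"]}
    # Low confidence: fail gracefully and hand control back to the user.
    return {"mode": "defer", "text": None,
            "note": "I couldn't produce a reliable answer for this.",
            "actions": ["rephrase", "do_it_manually", "contact_support"]}
```

The key design choice is that even the lowest tier returns something useful: a set of next steps that keep the user in control, rather than a silent failure or a confidently wrong answer.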

Trust Recovery Patterns

Research presented at the International Conference on Intelligent User Interfaces (Kahr et al., 2024) found that trust drops significantly after AI errors but can be restored quickly if the system performs consistently afterwards. Errors that occur later, once a performance history has been established, cause less severe trust loss than early errors.

This finding highlights the importance of building trust equity early in the relationship, equity that can buffer against inevitable failures. It also suggests specific design patterns for trust recovery:

- Immediate Acknowledgment: Recognising the error quickly signals honesty

- Clear Explanation: Providing understandable reasons for the failure demonstrates transparency

- Actionable Alternatives: Offering other pathways maintains user agency

- Performance History Reminders: Contextualising the error within overall reliability

- Learning Commitment: Showing how the feedback improves future performance

These patterns transform error states from relationship destroyers into relationship development opportunities.
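
As an illustration, the five recovery patterns can be assembled into a single error response. This is a hypothetical sketch: the `ErrorContext` fields, the success counters, and the message wording are all assumptions, and any performance figure shown to users should come from your own telemetry.

```python
from dataclasses import dataclass, field


@dataclass
class ErrorContext:
    what_failed: str                  # e.g. "the meeting date"
    likely_cause: str                 # plain-language reason, if one is known
    alternatives: list[str] = field(default_factory=list)
    recent_successes: int = 0         # hypothetical counters from your telemetry
    recent_attempts: int = 0


def recovery_message(ctx: ErrorContext) -> list[str]:
    """Compose a recovery response that covers the five recovery patterns."""
    # Immediate acknowledgment: name the error plainly.
    parts = [f"I got {ctx.what_failed} wrong."]
    # Clear explanation: give an understandable reason.
    parts.append(f"This likely happened because {ctx.likely_cause}.")
    # Actionable alternatives: keep the user in control.
    if ctx.alternatives:
        parts.append("You can: " + "; ".join(ctx.alternatives) + ".")
    # Performance history reminder: put the error in context.
    if ctx.recent_attempts > 0:
        rate = ctx.recent_successes / ctx.recent_attempts
        parts.append(f"For context, {rate:.0%} of my last {ctx.recent_attempts} "
                     "answers on similar tasks were accepted.")
    # Learning commitment: only promise this if feedback really is used.
    parts.append("Your correction will be used to improve future suggestions.")
    return parts
```

For example, `recovery_message(ErrorContext("the meeting date", "the email used an ambiguous date format", ["edit the date", "ask me to re-read the email"], 47, 50))` yields five sentences, one per pattern.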

The Trust Journey Framework

Mapping the Trust Formation Process

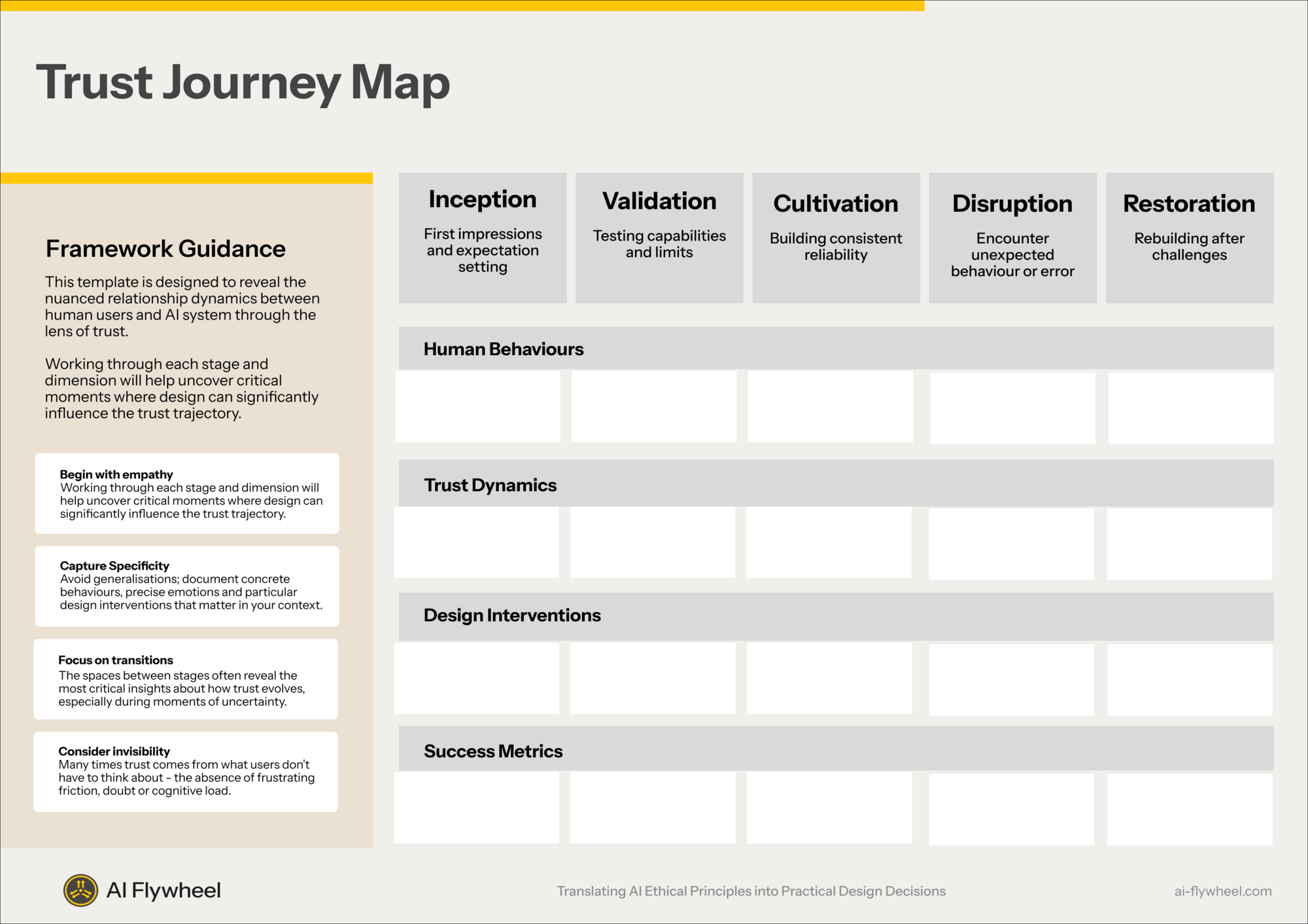

The Trust Journey Framework is a practical design framework, developed by Riley Coleman at AI Flywheel, that maps how trust forms, evolves, breaks, and recovers in AI systems. It rests on one of the most powerful ideas in this new paradigm: trust isn't a binary state but a relationship that evolves over time through specific touchpoints and experiences.

Forward-thinking design teams are now mapping trust journeys to identify critical moments that shape the user's confidence in AI systems.

The framework shows that each stage calls for different design interventions:

- Trust Formation: Creating appropriate first impressions, setting clear expectations of capabilities and limitations, and giving users early granular control over data being used

- Trust Testing: Supporting users as they actively verify AI capabilities

- Trust Building: Delivering consistent good performance to build confidence

- Trust Challenge: Gracefully handling errors or unexpected behaviours

- Trust Recovery: Designing effective system responses to failures

Understanding these stages allows designers to pinpoint the critical moments where trust is most fragile, and where the right design intervention can make the difference between adoption and abandonment.

Design Actions for Each Trust Stage

First Use: Design for Transparent Onboarding

- Capability Disclosure: Clearly communicate what the AI can and cannot do

- Limitation Transparency: Explicitly state boundaries and limitations upfront

- Privacy Controls: Provide meaningful privacy settings with clear explanations

- Value Demonstration: Show immediate value with simple, successful first interactions

- Expectation Setting: Set realistic expectations about AI performance and learning

Early Usage: Design for Verification

- Progressive Disclosure: Gradually introduce complexity as user confidence grows

- Low-Stakes Testing Grounds: Create safe spaces for experimentation

- Visible Confidence Indicators: Show how confident the AI is in each output

- Feedback Collection: Actively solicit user impressions during early interactions

- Quick Wins: Design initial experiences that showcase reliability in controlled contexts

Regular Usage: Design for Deepening Confidence

- Consistency Patterns: Establish predictable interaction rhythms

- Performance Dashboards: Show improvement metrics over time

- Personalisation Evidence: Make adaptation to user preferences visible

- Progressive Controls: Offer increasing customisation options as expertise grows

- Relationship Memory: Reference past interactions to build continuity

Error Encounters: Design for Graceful Recovery

- Transparent Explanations: Explain what went wrong and why

- Corrective Pathways: Provide clear actions to remedy the situation

- Learning Signals: Show how the system is improving from mistakes

- Human Backstops: Offer access to human assistance for critical failures

- Apology Mechanics: Acknowledge failure with appropriate tone

Continued Use: Design for Relationship Building

- Evolving Capabilities: Demonstrate new competencies based on usage patterns

- Trust Reinforcement: Periodically remind users of system reliability statistics

- Collaborative Memory: Build shared history that deepens over time

- Value Tracking: Help users see the cumulative benefit of their AI collaboration

- Agency Calibration: Adjust autonomy levels based on established trust
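
Agency calibration can be sketched as a function that raises or lowers autonomy based on the user's track record with the AI. The three autonomy levels, the 20-interaction minimum, and the acceptance-rate thresholds below are hypothetical choices for illustration; the one principle the sketch tries to encode is that the user's own ceiling always wins.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST = 1           # AI proposes, user decides
    ACT_WITH_CONFIRM = 2  # AI prepares the action, user confirms
    ACT_AND_NOTIFY = 3    # AI acts, user is told and can undo


def calibrate_autonomy(accepted: int, total: int, recent_errors: int,
                       user_max: Autonomy = Autonomy.ACT_AND_NOTIFY) -> Autonomy:
    """Pick an autonomy level from the user's track record with the AI.

    Thresholds are hypothetical; the user's chosen ceiling always wins.
    """
    if not 0 <= accepted <= total:
        raise ValueError("accepted must be between 0 and total")
    if total < 20 or recent_errors > 0:
        # Too little history, or trust was just challenged: stay conservative.
        level = Autonomy.SUGGEST
    elif accepted / total >= 0.9:
        level = Autonomy.ACT_AND_NOTIFY
    elif accepted / total >= 0.75:
        level = Autonomy.ACT_WITH_CONFIRM
    else:
        level = Autonomy.SUGGEST
    # Never exceed the autonomy level the user has allowed.
    return min(level, user_max)
```

Dropping back to suggestion-only after a recent error is one way to reflect the Trust Challenge stage: autonomy is earned back through consistent performance rather than restored automatically.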

The Personalisation Paradox

The Fine Line Between Intimacy and Intrusion

Hyper-personalisation represents both the greatest opportunity and the most significant risk in AI relationship design. When done respectfully, personalisation creates a sense of being truly understood, a fundamental human desire. When it oversteps boundaries, however, it can trigger an immediate breach of trust that's difficult to repair.

Recent research in human trust psychology confirms that respecting boundaries serves as a foundational trust signal. In AI relationships, this suggests that personalisation should follow explicit consent rather than pushing boundaries.

Trust Signals in Personalisation Design

The way personalisation is implemented sends powerful signals about the system's trustworthiness:

- Opt-in by Default: Requiring explicit consent signals respect

- Contextual Recommendations: Suggestions that arrive at the right moment signal attentiveness

- Privacy-Preserving Processing: On-device processing signals respect for user data

- Preference Validation: Confirming inferred preferences signals transparency

- Personalisation Explanations: "We're suggesting this because..." signals honesty

The Gracefully Degraded Alternative

A critical principle in trustworthy AI design is providing a gracefully degraded experience for users who choose to prioritise privacy over personalisation. This ensures that privacy-conscious users still get value from your product without feeling penalised for their choices.

By designing your system to function effectively at various levels of data access, you create a more inclusive product that respects the full spectrum of user privacy preferences while still delivering core value.
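
A minimal sketch of this idea, assuming a hypothetical three-tier consent model and made-up feature names: each feature declares the least data access it needs, the default tier is the most private one (so personalisation stays opt-in), and core features remain available at every tier.

```python
from enum import IntEnum


class DataAccess(IntEnum):
    NONE = 0           # default: nothing beyond the current request
    ON_DEVICE = 1      # history processed locally only
    CLOUD_HISTORY = 2  # history may be processed server-side


# Hypothetical feature catalogue: the minimum data access each feature needs.
FEATURES = {
    "core_search": DataAccess.NONE,
    "recent_items": DataAccess.ON_DEVICE,
    "personalised_ranking": DataAccess.ON_DEVICE,
    "cross_device_recommendations": DataAccess.CLOUD_HISTORY,
}


def available_features(consent: DataAccess = DataAccess.NONE) -> dict[str, bool]:
    """Return which features run at the user's chosen consent level.

    The default is the most private tier, so personalisation is opt-in.
    """
    return {name: consent >= required for name, required in FEATURES.items()}
```

Declaring requirements per feature, rather than branching on consent throughout the codebase, makes it easy to check that every tier still delivers the core experience.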

Trust Testing Methodologies

Beyond Traditional User Testing

Traditional UX testing focuses on usability and functionality, but relationship design requires testing for trust. This means developing new methodologies specifically for evaluating the quality of AI relationships.

Trust-Specific User Testing

Effective trust testing requires protocols designed to measure the psychological dimensions of the AI relationship:

- Expectation Mapping: Before first use, document user expectations about AI capabilities

- Trust Interaction Analysis: Observe when users override AI suggestions or double-check results

- Trust Challenge Simulation: Intentionally introduce errors to observe recovery

- Longitudinal Trust Tracking: Measure relationship development over extended periods

- Trust Breaking Points: Identify exactly where personalisation crosses from helpful to intrusive

Trust Metrics for Executives

To build organisational support for relationship-centred design, metrics must speak to business outcomes:

- Failure Recovery Success: % of users who continue engagement after errors

- Relationship Deepening Rate: Progression through trust journey stages

- Trust-Based Feature Adoption: Usage of features requiring high trust

- Long-Term Retention Correlation: Connection between trust indicators and retention

- Trust Gap Index: Difference between expected and actual user trust levels

- Transparency Engagement Rate: Usage of explanation features

- User Override Frequency: How often users reject AI recommendations

- Trust Recovery Time: Sessions needed to restore trust after failures
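
Several of these metrics can be computed directly from interaction logs. The sketch below assumes a hypothetical event log in which each record carries a user ID, a per-user session number, and one of three event kinds. The operational definitions (for example, treating the next session with an accepted suggestion as "trust restored") are illustrative proxies, not standard formulas.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Event:
    user: str
    session: int  # per-user session counter that increases over time
    kind: str     # hypothetical event kinds: "accept", "override", or "error"


def _by_user(events: list[Event]) -> dict[str, list[Event]]:
    grouped: dict[str, list[Event]] = defaultdict(list)
    for event in events:
        grouped[event.user].append(event)
    return grouped


def override_frequency(events: list[Event]) -> float:
    """User Override Frequency: share of AI suggestions the user rejected."""
    decisions = [e for e in events if e.kind in ("accept", "override")]
    if not decisions:
        return 0.0
    return sum(e.kind == "override" for e in decisions) / len(decisions)


def failure_recovery_success(events: list[Event]) -> float:
    """Failure Recovery Success: share of users who hit an error and still
    engaged in a session after their first error."""
    affected = recovered = 0
    for user_events in _by_user(events).values():
        error_sessions = [e.session for e in user_events if e.kind == "error"]
        if not error_sessions:
            continue
        affected += 1
        first_error = min(error_sessions)
        if any(e.session > first_error for e in user_events):
            recovered += 1
    return recovered / affected if affected else 0.0


def trust_recovery_time(events: list[Event]) -> Optional[float]:
    """Trust Recovery Time (proxy): mean number of sessions between an error
    and the next session in which the user accepted a suggestion."""
    gaps = []
    for user_events in _by_user(events).values():
        accepts = sorted(e.session for e in user_events if e.kind == "accept")
        for error in (e for e in user_events if e.kind == "error"):
            later = [s for s in accepts if s > error.session]
            if later:
                gaps.append(later[0] - error.session)
    return mean(gaps) if gaps else None
```

Each definition here is one reasonable choice; whichever you adopt, keep it fixed over time so that trends reflect changes in trust rather than changes in the formula.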

Building the Business Case

For design leaders to secure investment in relationship-centred approaches, the business case must be compelling. Fortunately, recent research provides powerful evidence:

- Deloitte's 2024 research found organisations taking trust-related actions were 18% more likely to achieve expected benefits. Benefits include improved products and services, increased innovation, higher efficiency and productivity, reduced costs, faster development, increased revenue, enhanced customer relationships, and better risk detection

- Leading organisations investing in comprehensive trust measures achieve a 10.3x ROI, roughly 2.8 times the 3.7x average return for companies investing in generative AI.

- Harvard Business Review research shows companies demonstrating ethical AI practices experience 30% higher customer retention rates compared to competitors with either questionable or invisible AI ethics.

This economic reality creates a powerful argument for elevating relationship design from a "nice to have" to a strategic imperative. Trust isn't merely a user experience concern. It's a fundamental business driver.

First Steps for Design Leaders

Design leaders can begin the transformation to relationship-centred design with these concrete actions:

- Conduct a Trust Journey Audit: Map your current AI experiences against the Trust Journey Framework

- Establish Trust Metrics: Implement basic measurements for relationship quality

- Run a Baseline Trust Test: Conduct a trust-focused user test of your existing experience

- Update Your Design System: Add transparent AI components that build trust

- Prioritise Trust-Building Changes: Implement them and keep testing as trust builds and changes over time

- Invest in Relationship Design Training: Build team capabilities in this new discipline

These steps provide a practical roadmap for beginning the transformation while building organisational support for the broader vision.

The Future of Relationship Design

This isn't a matter of adding a few new techniques to our existing toolkit. It's about fundamentally reimagining what design means in an era where technology becomes a collaborative partner rather than a passive tool.

The future belongs to those who understand that when we design AI experiences, we're not just designing interfaces. We're designing relationships. And like all meaningful relationships, they must be built on a foundation of trust, with the resilience to recover when things inevitably go wrong.